Data migration, the process of transferring data from one system to another, is a critical undertaking for modern organizations. However, the movement of data across networks introduces significant security vulnerabilities. Securing data in transit during migration is paramount to protecting sensitive information from unauthorized access, breaches, and potential regulatory non-compliance. This guide delves into the multifaceted aspects of securing data during this process, providing a structured approach to mitigate risks and ensure data integrity.

This discussion will explore a range of security measures, from encryption protocols and secure transfer methods to network segmentation and compliance considerations. We will dissect the risks associated with data in transit, analyze the efficacy of different security solutions, and provide actionable insights to safeguard data throughout the migration lifecycle. The goal is to equip readers with the knowledge and tools necessary to conduct secure and compliant data migrations.

Understanding Data in Transit and Migration

Data migration projects involve the movement of data from one system, storage location, or format to another. A critical aspect of this process is securing the data while it is “in transit,” meaning the data is actively moving between endpoints. This necessitates a deep understanding of the threats, vulnerabilities, and security best practices required to protect sensitive information during this vulnerable phase.

Defining Data in Transit within Data Migration

Data in transit, within the context of a data migration, refers to data that is actively being moved from a source location to a destination. This includes data moving across networks, between servers, or even within a single system during the migration process. It encompasses the period from when the data leaves its original storage location until it arrives at its new destination and is considered safely at rest.

Risks of Unsecured Data During Migration

Unsecured data in transit is highly susceptible to various threats. This vulnerability arises from the inherent exposure of data during its movement, increasing the potential for interception, modification, or unauthorized access.

- Data Breach: Unauthorized access to and exfiltration of sensitive information, leading to significant financial, reputational, and legal repercussions. Examples include the theft of customer data, financial records, or intellectual property.

- Data Modification: Alteration of data during transit, potentially corrupting the migrated data and leading to incorrect analysis, faulty decision-making, and loss of data integrity. This can involve the insertion of malicious code or manipulation of financial transactions.

- Data Loss: Interruption of the data transfer process resulting in the loss of data, either partially or entirely. This can be caused by network failures, system crashes, or malicious attacks.

- Compliance Violations: Failure to comply with data privacy regulations (e.g., GDPR, CCPA) can lead to significant fines and legal action. Migration projects often handle personal data, making compliance a critical concern.

- Reputational Damage: A data breach or data loss can severely damage an organization’s reputation, eroding customer trust and potentially impacting business relationships.

Common Attack Vectors Targeting Data in Transit

Attackers employ various methods to compromise data in transit. Understanding these attack vectors is crucial for implementing effective security measures.

- Man-in-the-Middle (MitM) Attacks: Attackers intercept and potentially alter communication between the source and destination, often by impersonating one or both parties. This can be achieved through techniques like ARP poisoning or DNS spoofing.

- Eavesdropping: Attackers passively monitor network traffic to capture sensitive data as it flows across the network. This can be accomplished using network sniffing tools.

- Malware Infections: Malicious software can be introduced into the source or destination systems to steal data or intercept data in transit. This can include keyloggers, trojans, and other forms of malware.

- Unsecured Protocols: Using protocols like HTTP or FTP without encryption leaves data vulnerable to interception. These protocols transmit data in plain text, making it easily readable by attackers.

- Insider Threats: Malicious or negligent insiders with access to the migration process can intentionally or unintentionally compromise data security. This includes employees with privileged access who may steal or leak sensitive information.
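As a small illustration of the unsecured-protocols point above, the following sketch flags migration endpoints whose URL scheme implies plaintext transport. The allow-list and the function names are assumptions made for this example, not part of any migration tool.

```python
from urllib.parse import urlsplit

# Assumption: an illustrative allow-list of schemes that imply encrypted transport.
ENCRYPTED_SCHEMES = {"https", "sftp", "ftps", "scp"}


def uses_encrypted_scheme(url: str) -> bool:
    """Return True when the URL scheme is on the encrypted allow-list."""
    return urlsplit(url).scheme.lower() in ENCRYPTED_SCHEMES


def plaintext_endpoints(urls: list[str]) -> list[str]:
    """Return the endpoints that should be reviewed before the migration starts."""
    return [url for url in urls if not uses_encrypted_scheme(url)]
```

A check like this only inspects the scheme; it cannot confirm that the server behind an `https` or `sftp` URL is configured securely.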

Distinguishing Data at Rest, in Transit, and in Use

Data security considerations vary significantly depending on the data’s state: at rest, in transit, or in use. Each state presents unique security challenges and requires different protective measures.

- Data at Rest: Data stored in a static state on storage devices such as hard drives, databases, or cloud storage. Security focuses on access controls, encryption, and physical security.

- Data in Transit: Data actively moving between locations or systems, as discussed above. Security emphasizes encryption, secure protocols, and monitoring.

- Data in Use: Data being processed in memory by a computer or application. Security considerations include access controls, memory protection, and secure coding practices.

The following table summarizes the key differences in security considerations for each data state:

| Data State | Description | Key Security Concerns | Security Measures |
|---|---|---|---|
| Data at Rest | Data stored on storage devices. | Unauthorized access, physical security, data breaches. | Encryption, access controls, data masking, physical security measures. |
| Data in Transit | Data moving between locations or systems. | Eavesdropping, MitM attacks, data breaches. | Encryption (TLS/SSL), secure protocols, VPNs, network segmentation. |
| Data in Use | Data being processed in memory. | Malware injection, buffer overflows, memory leaks. | Access controls, memory protection, secure coding practices, input validation. |

Choosing the Right Encryption Methods

Selecting the appropriate encryption methods is paramount for safeguarding data during migration. The choice of encryption directly impacts the confidentiality, integrity, and availability of the data throughout the transfer process. This section delves into the intricacies of encryption algorithms, highlighting their strengths, weaknesses, and practical applications in a data migration context. A careful consideration of these aspects ensures the selected method aligns with the specific security requirements and performance constraints of the migration.

Encryption Algorithms for Data Migration

Several encryption algorithms are suitable for securing data during migration, each employing different mathematical principles to transform data into an unreadable format. The selection of an algorithm depends on factors such as the sensitivity of the data, the required level of security, and the performance characteristics of the migration environment.

- Advanced Encryption Standard (AES): AES is a symmetric block cipher widely adopted for its robustness and efficiency. It operates on fixed-size 128-bit data blocks using a secret key to encrypt and decrypt the data. AES supports three key lengths (128, 192, and 256 bits), offering increasing levels of security. AES is generally favored for its speed and strong security, making it suitable for encrypting large volumes of data during migration.

- Rivest-Shamir-Adleman (RSA): RSA is an asymmetric encryption algorithm that relies on a pair of keys: a public key for encryption and a private key for decryption. The public key can be freely distributed, while the private key must be kept secret. RSA is commonly used for key exchange and digital signatures, as well as for encrypting smaller amounts of data. RSA’s computational complexity makes it less efficient for encrypting large datasets compared to symmetric algorithms.

- Triple DES (3DES): 3DES is a symmetric-key algorithm derived from the Data Encryption Standard (DES). It applies DES three times to each block using either two or three different keys. 3DES provides a higher level of security than DES but is slower because of the three passes. It survives in some legacy systems, but its 64-bit block size makes it weaker than AES, and NIST has deprecated it, so it should be avoided for new migrations.

- Secure Hash Algorithm (SHA): While not an encryption algorithm, SHA is a family of cryptographic hash functions that produce a fixed-size “fingerprint” of a data set. Comparing the hash computed at the source with the hash computed at the destination verifies data integrity and reveals whether the data changed during migration. To detect deliberate tampering, the hash itself must be protected, for example with a keyed HMAC or a digital signature. The family includes SHA-256 and SHA-384, which differ in output length.
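The integrity check described for SHA can be sketched with Python's standard `hashlib` module. The function names and the chunk size are assumptions chosen for this example; the idea is simply to hash the file on both sides and compare the results.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 1024 * 1024) -> str:
    """Hash a file in chunks so large migration files never sit fully in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def transfer_is_intact(source_path: str, destination_path: str) -> bool:
    """Compare the source and destination digests after the transfer completes."""
    return sha256_of_file(source_path) == sha256_of_file(destination_path)
```

In practice the two digests are usually computed on different machines and exchanged over a trusted channel, rather than compared by one process as in this sketch.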

Symmetric vs. Asymmetric Encryption

The choice between symmetric and asymmetric encryption depends on the specific requirements of the data migration process. Understanding the fundamental differences between these two approaches is crucial for making an informed decision.

- Symmetric Encryption: Symmetric encryption uses the same secret key for both encryption and decryption. This method is generally faster and more efficient than asymmetric encryption, making it suitable for encrypting large volumes of data. However, the secure distribution of the secret key to all parties involved in the migration is a significant challenge. Examples include AES and 3DES.

- Asymmetric Encryption: Asymmetric encryption uses a pair of mathematically related keys: a public key and a private key. Data encrypted with the public key can only be decrypted with the corresponding private key, and vice versa. This approach simplifies key management, as the public key can be freely distributed. However, asymmetric encryption is typically slower than symmetric encryption, making it less suitable for encrypting large datasets directly. RSA is a common example.

Comparison of Encryption Methods

The following table provides a comparative analysis of different encryption methods, outlining their pros and cons.

| Encryption Method | Type | Pros | Cons |
|---|---|---|---|
| AES | Symmetric | Fast, robust, widely supported, various key lengths. | Key distribution needs secure method. |
| RSA | Asymmetric | Simplified key management (public key), digital signatures. | Slower than symmetric, computationally intensive, suitable for smaller data. |
| 3DES | Symmetric | Stronger than DES. | Slower than AES, less secure than AES, susceptible to certain attacks. |
| SHA | Hashing | Verifies data integrity, widely used. | Does not encrypt data (only generates a hash), vulnerable to collision attacks if used improperly. |

Selecting the Appropriate Encryption Method

The selection of the most appropriate encryption method for data migration involves a careful assessment of several factors, including the sensitivity of the data, the performance requirements, and the available resources. The following guide outlines a process for making this decision.

- Assess Data Sensitivity: Determine the level of confidentiality required for the data. Highly sensitive data, such as financial records or personally identifiable information (PII), necessitates robust encryption algorithms like AES with a strong key length. Data with lower sensitivity might be adequately protected with a less computationally intensive method.

- Evaluate Performance Needs: Consider the volume of data being migrated and the available bandwidth. Symmetric encryption methods, such as AES, are generally faster and more efficient for large data transfers. If performance is a critical factor, prioritize symmetric encryption.

- Address Key Management: Plan for the secure management and distribution of encryption keys. For symmetric encryption, a secure key exchange mechanism is essential. Asymmetric encryption simplifies key management but can be slower.

- Consider Compliance Requirements: Adhere to relevant industry regulations and compliance standards, such as HIPAA or GDPR, which may dictate specific encryption requirements.

- Test and Validate: Thoroughly test the chosen encryption method in a controlled environment before deploying it in a production migration. Verify that the encryption and decryption processes function correctly and that performance meets expectations.

Implementing Secure Protocols (TLS/SSL)

Securing data in transit during migration necessitates the implementation of robust protocols to safeguard sensitive information from unauthorized access. Transport Layer Security (TLS) and its predecessor, Secure Sockets Layer (SSL), are cryptographic protocols designed to provide secure communication over a network. These protocols ensure data confidentiality, integrity, and authentication, crucial elements for a secure migration process.

How Transport Layer Security (TLS) and Secure Sockets Layer (SSL) Protect Data in Transit

TLS and SSL function by establishing an encrypted connection between a client (e.g., a source server) and a server (e.g., a destination server). This encryption protects the data transmitted between the two parties from eavesdropping and tampering. The process generally involves several key steps:

- Handshake: The client and server negotiate the security parameters, including the TLS/SSL version, cipher suite, and authentication methods to be used. The client initiates the handshake by sending a “Client Hello” message, and the server responds with a “Server Hello” message.

- Authentication: The server presents its digital certificate to the client, which contains the server’s public key and is signed by a Certificate Authority (CA). The client verifies the certificate’s validity by checking the CA’s signature and ensuring the certificate has not been revoked. This step authenticates the server’s identity.

- Key Exchange: The client and server exchange cryptographic keys to establish a shared secret key. This key is used to encrypt and decrypt the data transmitted during the session. The specific key exchange method depends on the chosen cipher suite. Ephemeral Diffie-Hellman variants (DHE or ECDHE) are preferred because they provide forward secrecy; TLS 1.3 supports only these, while older versions also permit RSA key exchange.

- Encrypted Data Transfer: Once the secure connection is established, all data transmitted between the client and server is encrypted using the shared secret key. This encryption ensures data confidentiality. Integrity checks, such as using Message Authentication Codes (MACs), are performed to detect any tampering with the data during transit.
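To make the key exchange step more concrete, here is a toy Diffie-Hellman sketch using only the Python standard library. The prime, the generator, and the function names are assumptions for illustration; the parameters are far too small for real use, and an actual TLS stack relies on standardized groups or elliptic curves rather than code like this.

```python
import hashlib
import secrets

# Toy parameters for illustration only: a 127-bit Mersenne prime is far too
# small for production use.
P = 2**127 - 1
G = 3


def make_keypair() -> tuple[int, int]:
    """Return (private, public), where public = G^private mod P."""
    private = secrets.randbelow(P - 3) + 2  # random value in [2, P - 2]
    public = pow(G, private, P)
    return private, public


def shared_key(own_private: int, peer_public: int) -> bytes:
    """Derive a 32-byte session key from the Diffie-Hellman shared secret."""
    secret = pow(peer_public, own_private, P)
    return hashlib.sha256(secret.to_bytes(16, "big")).digest()
```

Each side keeps its private value and sends only the public value; both sides then arrive at the same session key without that key ever crossing the network.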

The effectiveness of TLS/SSL hinges on the strength of the chosen cipher suites. Strong cipher suites employ robust encryption algorithms and key lengths to resist various cryptographic attacks. The use of modern TLS versions (TLS 1.2 or TLS 1.3) is highly recommended, as they offer improved security and performance compared to older versions like SSL 3.0 or TLS 1.0.
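On the client side, the recommendation to use modern TLS versions could be enforced roughly as follows with Python's standard `ssl` module. The function names are assumptions for this sketch, and the host passed to `negotiated_tls_version` is whatever destination server the migration targets.

```python
import socket
import ssl


def make_migration_tls_context() -> ssl.SSLContext:
    """Build a client context that verifies certificates and refuses old protocols."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Reject SSL 3.0, TLS 1.0, and TLS 1.1 during the handshake.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def negotiated_tls_version(host: str, port: int = 443) -> str:
    """Connect to the server and report the protocol version that was negotiated."""
    context = make_migration_tls_context()
    with socket.create_connection((host, port), timeout=10) as raw_socket:
        with context.wrap_socket(raw_socket, server_hostname=host) as tls_socket:
            return tls_socket.version()
```

If the server only offers an older protocol, the handshake fails with an `ssl.SSLError` instead of silently downgrading.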

Step-by-step Configuration of TLS/SSL for Secure Data Transfer During Migration

Configuring TLS/SSL for secure data transfer requires a series of well-defined steps, varying slightly depending on the specific operating systems, applications, and migration tools employed. However, the core principles remain consistent. This section provides a general guide.

- Obtain an SSL/TLS Certificate: Acquire a digital certificate from a trusted Certificate Authority (CA). This certificate verifies the identity of the server and allows clients to trust the connection. Alternatively, for internal testing or non-public deployments, a self-signed certificate can be generated, though it is not recommended for production environments due to the lack of trust from external clients.

- Install the Certificate on the Server: Install the certificate on the server that will be handling the data transfer. This usually involves importing the certificate and its associated private key into the server’s certificate store. The specific process depends on the server software (e.g., Apache, Nginx, Microsoft IIS).

- Configure the Server to Use TLS/SSL: Configure the server software to use the installed certificate and to listen for secure connections on a specific port (typically port 443 for HTTPS). This involves modifying the server’s configuration files to specify the certificate file, the private key file, and the desired cipher suites.

- Configure the Client to Connect Securely: Configure the client (e.g., the migration tool or script) to connect to the server using HTTPS. This typically involves specifying the server’s hostname and port (443) and ensuring that the client trusts the server’s certificate. The client might need to be configured to verify the certificate’s validity.

- Test the Connection: After configuring both the server and the client, test the secure connection to ensure that data is being transferred securely. This can involve using a web browser to access the server via HTTPS or using a command-line tool like `openssl s_client` to verify the TLS/SSL handshake. Analyze the connection details, checking the cipher suite and the certificate details.

- Implement Certificate Pinning (Optional): For enhanced security, consider implementing certificate pinning on the client-side. Certificate pinning involves hardcoding the expected certificate or public key into the client application. This prevents man-in-the-middle attacks if the CA is compromised or if a rogue certificate is used.
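The optional pinning step can be sketched as a fingerprint comparison. This is a minimal example under the assumption that the pin is the SHA-256 hash of the server's DER-encoded certificate; the function names are hypothetical.

```python
import hashlib
import hmac


def certificate_fingerprint(der_bytes: bytes) -> str:
    """Return the SHA-256 fingerprint of a DER-encoded certificate as hex."""
    return hashlib.sha256(der_bytes).hexdigest()


def matches_pin(der_bytes: bytes, pinned_fingerprint: str) -> bool:
    """Compare the presented certificate against the fingerprint stored in the client."""
    actual = certificate_fingerprint(der_bytes)
    # compare_digest avoids leaking the mismatch position through timing.
    return hmac.compare_digest(actual, pinned_fingerprint.lower())
```

With Python's `ssl` module, the DER bytes of the peer certificate are available from `SSLSocket.getpeercert(binary_form=True)` once the handshake has completed. Pinning the whole certificate means the pin must be updated whenever the certificate is renewed.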

Example (Apache Configuration): To configure Apache to use TLS/SSL, you would typically modify the Apache configuration file (e.g., `httpd.conf` or `apache2.conf`) to include the following directives within a `<VirtualHost>` block:

- `SSLEngine on` enables SSL/TLS.

- `SSLCertificateFile` specifies the path to the SSL certificate file.

- `SSLCertificateKeyFile` specifies the path to the private key file.

- `SSLCACertificateFile` specifies the path to the CA certificate (if applicable).

After modifying the configuration file, restart the Apache service for the changes to take effect.

Best Practices for Managing and Renewing SSL/TLS Certificates

Proper management and renewal of SSL/TLS certificates are crucial for maintaining secure data transfer. Certificates have an expiration date, and failure to renew them can lead to connection failures and security vulnerabilities. Adhering to best practices ensures continuous security.

- Monitor Certificate Expiration Dates: Regularly monitor the expiration dates of all SSL/TLS certificates. Implement automated alerts or notifications to provide ample time for renewal. Tools like online certificate checkers or monitoring dashboards can assist in this process.

- Automate Certificate Renewal: Automate the certificate renewal process whenever possible. Services like Let’s Encrypt provide free certificates and offer automated renewal options. This minimizes manual intervention and reduces the risk of human error.

- Use Certificate Management Tools: Utilize certificate management tools to streamline the process of obtaining, installing, and renewing certificates. These tools can automate many of the tasks involved in certificate management, such as generating Certificate Signing Requests (CSRs) and installing certificates on servers.

- Implement a Certificate Inventory: Maintain a comprehensive inventory of all SSL/TLS certificates, including their expiration dates, issuing CA, and the servers on which they are installed. This inventory should be regularly updated to reflect any changes.

- Revoke Compromised Certificates: If a certificate is compromised, immediately revoke it to prevent unauthorized use. Contact the CA to revoke the certificate and then generate and install a new certificate.

- Rotate Certificates Regularly: Consider rotating certificates periodically, even before they expire. This reduces the potential impact of a compromised certificate. Implement this rotation strategy based on the risk assessment and security requirements of the data migration.

- Store Private Keys Securely: Protect the private keys associated with SSL/TLS certificates. Store them in a secure location, such as a hardware security module (HSM) or a password-protected key store. Limit access to private keys to authorized personnel only.
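Expiration monitoring can be approximated with a few lines of Python. The sketch below assumes the expiry date is available in the `notAfter` text format that Python's `ssl` module reports for a peer certificate; the function names and the 30-day threshold are assumptions for illustration.

```python
import ssl
import time
from typing import Optional


def days_until_expiry(not_after: str, now: Optional[float] = None) -> float:
    """Return the number of days between `now` and the certificate's notAfter date."""
    expires_at = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return (expires_at - current) / 86400


def needs_renewal(not_after: str, threshold_days: int = 30,
                  now: Optional[float] = None) -> bool:
    """Flag certificates that expire within the alert threshold."""
    return days_until_expiry(not_after, now) <= threshold_days
```

The `notAfter` string can be read from the dictionary returned by `SSLSocket.getpeercert()`, so a check like this can run as a scheduled job against every server in the certificate inventory.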

Troubleshooting Common TLS/SSL Connection Issues During Migration

Encountering TLS/SSL connection issues during a data migration is common. These issues can stem from various sources, including misconfigurations, certificate problems, or network connectivity issues. Identifying and resolving these problems requires a systematic approach.

- Certificate Errors: Certificate errors are a frequent cause of connection problems. Common errors include:

  - Expired Certificate: Verify that the certificate has not expired. Check the certificate’s expiration date and renew it if necessary.

  - Invalid Certificate: Ensure the certificate is valid for the server’s hostname or IP address. Check the certificate’s Subject Alternative Names (SANs) to confirm that the correct names are listed.

  - Untrusted Certificate: Verify that the client trusts the certificate’s issuing CA. If the CA is not trusted by default, install the CA’s root certificate on the client.

  - Certificate Revocation: Check if the certificate has been revoked by the CA. This can be verified through the Online Certificate Status Protocol (OCSP) or Certificate Revocation Lists (CRLs).

- Cipher Suite Mismatches: Cipher suite mismatches can occur when the client and server do not support a common cipher suite. This can happen when the server’s configuration restricts the available cipher suites or when the client’s software is outdated.

  - Check Server Configuration: Verify that the server is configured to support a range of modern and secure cipher suites. Avoid using outdated or weak cipher suites.

  - Update Client Software: Ensure that the client software is up to date and supports modern cipher suites.

- Network Connectivity Issues: Network connectivity issues can prevent a successful TLS/SSL connection.

  - Firewall Rules: Check firewall rules on both the client and server to ensure that traffic on port 443 (or the configured port for HTTPS) is allowed.

  - DNS Resolution: Verify that the client can resolve the server’s hostname to its correct IP address.

  - Network Latency: High network latency can sometimes cause connection timeouts. Investigate network performance issues if necessary.

- Incorrect Server Configuration: Incorrect server configurations can lead to TLS/SSL connection problems.

  - Certificate Installation: Verify that the certificate and private key are correctly installed on the server and that the server is configured to use them.

  - Configuration Errors: Review the server’s configuration files for any errors related to TLS/SSL settings.

  - Server Restart: After making any configuration changes, restart the server to apply the changes.

- Client-Side Issues: Problems on the client-side can also cause TLS/SSL connection issues.

  - Browser Settings: Check the client’s browser settings to ensure that TLS/SSL is enabled and that the browser trusts the server’s certificate.

  - Proxy Settings: If a proxy server is being used, ensure that the proxy is configured to handle HTTPS traffic correctly.

- Using Diagnostic Tools: Utilize diagnostic tools to help troubleshoot TLS/SSL connection issues.

  - `openssl s_client` Command: Use the `openssl s_client` command-line tool to test the TLS/SSL connection and identify any errors. This tool provides detailed information about the handshake process, certificate details, and cipher suites.

  - Web Browser Developer Tools: Use the web browser’s developer tools to inspect the network traffic and identify any TLS/SSL-related errors.

  - Network Analyzers: Use network analyzers, such as Wireshark, to capture and analyze network traffic to identify any TLS/SSL-related problems.
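When the migration client is a script, the troubleshooting categories above can be mapped onto the exceptions that Python's `socket` and `ssl` modules raise. The category labels and the function name below are assumptions for this sketch; the mapping is a first triage step, not a full diagnosis.

```python
import socket
import ssl


def classify_connection_error(error: Exception) -> str:
    """Map a connection exception to the troubleshooting category to check first."""
    # Order matters: SSLCertVerificationError is a subclass of SSLError.
    if isinstance(error, ssl.SSLCertVerificationError):
        return "certificate"      # expired, untrusted, or hostname mismatch
    if isinstance(error, ssl.SSLError):
        return "tls-handshake"    # protocol version or cipher suite mismatch
    if isinstance(error, socket.gaierror):
        return "dns"              # hostname could not be resolved
    if isinstance(error, socket.timeout):
        return "timeout"          # latency or a firewall silently dropping packets
    if isinstance(error, ConnectionRefusedError):
        return "refused"          # nothing listening, or a firewall rejecting the port
    return "unknown"
```

A migration script might wrap its connection attempt in `try`/`except OSError` and log the returned category alongside the original error message.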

Using VPNs for Secure Data Transfer

Virtual Private Networks (VPNs) provide a crucial layer of security during data migration by establishing encrypted connections over public networks. This shields data from eavesdropping and tampering, ensuring confidentiality and integrity throughout the transfer process. VPNs are particularly valuable when migrating data across geographically dispersed locations or when utilizing public cloud services.

Creating Secure Tunnels with VPNs

VPNs function by creating an encrypted tunnel between two points, effectively encapsulating the data within a secure “pipe.” This tunnel protects the data from interception as it travels across the internet or other untrusted networks. The encryption process involves using cryptographic algorithms to transform the data into an unreadable format, which can only be deciphered by the intended recipient.

The fundamental process involves several key steps:

- The VPN client, usually software installed on a device, initiates a connection to the VPN server.

- Authentication occurs, verifying the identity of the client and server, ensuring only authorized parties can establish the connection. This commonly involves usernames, passwords, certificates, or multi-factor authentication.

- An encryption key is negotiated between the client and server. This key is used to encrypt and decrypt the data. Secure key exchange protocols are employed to ensure the key remains secret.

- All data transmitted between the client and server is then encrypted using the agreed-upon key. This process, often utilizing algorithms like AES (Advanced Encryption Standard), ensures the data’s confidentiality.

- The VPN server decrypts the data received from the client and forwards it to its destination. Conversely, it encrypts data sent back to the client.

The use of encryption ensures that even if the data is intercepted, it remains unreadable without the decryption key. This mechanism provides a robust defense against various threats, including man-in-the-middle attacks and data breaches.

Types of VPNs and Suitability for Data Migration

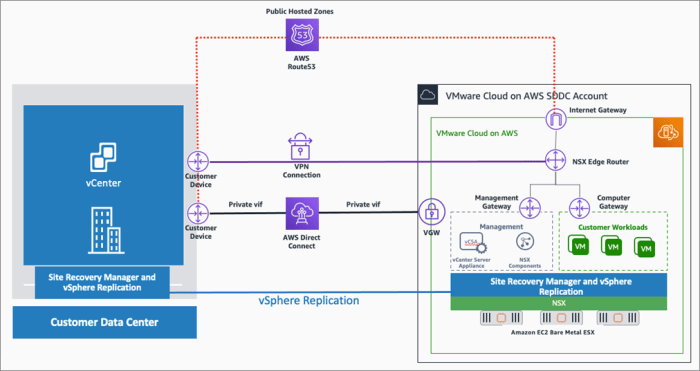

Different VPN types cater to various needs, and their suitability for data migration depends on the specific requirements of the migration project. The two primary categories are site-to-site VPNs and client-to-site VPNs.

- Site-to-Site VPNs: These VPNs connect entire networks together, allowing secure communication between multiple sites. They are ideally suited for data migration between two or more physical locations, such as migrating data from an on-premises data center to a cloud environment or between different company offices. Site-to-site VPNs typically involve dedicated hardware devices, such as VPN routers or firewalls, to manage the connection. Example: A company with a data center in New York and a branch office in London could use a site-to-site VPN to securely migrate data between the two locations. The VPN would encrypt all traffic between the two networks, protecting the data from interception.

- Client-to-Site VPNs: These VPNs allow individual users or devices to connect to a remote network securely. They are suitable for data migration where individual users need to access data remotely or when migrating data from a single device to a remote server. Client-to-site VPNs are often used by remote workers or when migrating data from a laptop or mobile device. Example: An employee working remotely could use a client-to-site VPN to securely access and upload data to a storage service hosted behind the VPN gateway. The VPN would encrypt all traffic between the employee’s device and the gateway, protecting the data from eavesdropping on the networks in between.

The choice between site-to-site and client-to-site VPNs depends on the migration scenario. Site-to-site VPNs are best for network-to-network migrations, while client-to-site VPNs are better suited for individual device or user access.

Setting Up a VPN for Data Migration with OpenVPN

OpenVPN is a widely-used open-source VPN software known for its flexibility and robust security features. Setting up OpenVPN involves several steps, including server configuration, client configuration, and certificate management. The following steps provide a simplified example of how to set up OpenVPN for a client-to-site VPN, suitable for migrating data from a single device.

1. Server Installation and Configuration

Install OpenVPN on the server (e.g., a cloud server or a dedicated VPN appliance). Configure the server with a private IP address, a subnet, and a port for incoming connections.

2. Certificate Authority (CA) Setup

Generate a Certificate Authority (CA) and create server and client certificates. This involves generating private and public keys for both the server and the client, ensuring secure authentication. The CA is responsible for signing the certificates, validating the authenticity of the server and clients.

3. Server Configuration File (server.conf)

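Create a server configuration file specifying the VPN’s settings, including the network interface, the port, the protocol (UDP or TCP), the cipher, the authentication method (e.g., TLS), the client’s IP address range, and the paths to the server certificate, key, and the CA certificate.

```
# Listen on UDP port 1194 using a routed (tun) interface
port 1194
proto udp
dev tun

# CA certificate, server certificate and key, and Diffie-Hellman parameters
ca ca.crt
cert server.crt
key server.key
dh dh.pem

# VPN subnet; client address assignments persist across restarts
server 10.8.0.0 255.255.255.0
ifconfig-pool-persist ipp.txt

# Route all client traffic through the VPN and push DNS servers to clients
push "redirect-gateway def1 bypass-dhcp"
push "dhcp-option DNS 8.8.8.8"
push "dhcp-option DNS 8.8.4.4"

keepalive 10 120
# Shared HMAC key for the TLS control channel (direction 0 on the server)
tls-auth ta.key 0
# Data-channel cipher; newer OpenVPN releases prefer AES-256-GCM (see data-ciphers)
cipher AES-256-CBC
# Drop privileges after startup (the group name varies by distribution)
user nobody
group nogroup
persist-key
persist-tun
status openvpn-status.log
verb 3
```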

4. Client Configuration File (client.ovpn)

Create a client configuration file containing the client’s settings, including the server’s IP address or hostname, the port, the protocol, the cipher, the authentication method, and the paths to the client certificate, key, and the CA certificate.

```
client
dev tun
proto udp

# Replace your_server_ip with the server's address or hostname
remote your_server_ip 1194
resolv-retry infinite
nobind
persist-key
persist-tun

# CA certificate plus this client's certificate and key
ca ca.crt
cert client.crt
key client.key

# Must mirror the server: key direction 1 here, 0 on the server
tls-auth ta.key 1
cipher AES-256-CBC
verb 3
```

5. Client Installation and Configuration

Install the OpenVPN client software on the client device (e.g., a laptop). Import the client configuration file and the necessary certificates.

6. Connection and Testing

Initiate the VPN connection from the client. Verify that the connection is established successfully and that data can be transferred securely.

The specific steps and configurations may vary depending on the operating system and the chosen VPN client. The example provided above illustrates the fundamental concepts.
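The `server 10.8.0.0 255.255.255.0` directive in the server configuration defines the VPN subnet. The sketch below uses Python's `ipaddress` module to show which addresses that subnet contains. It assumes a simple layout in which the server takes the first usable address and clients draw from the rest; the actual allocation depends on the OpenVPN topology setting, and the function name is hypothetical.

```python
import ipaddress


def vpn_pool(network: str, netmask: str):
    """Split a VPN subnet into (network, server address, candidate client addresses)."""
    net = ipaddress.ip_network(f"{network}/{netmask}")
    hosts = list(net.hosts())
    # Assumption: the server uses the first usable address in the subnet.
    return net, hosts[0], hosts[1:]


net, server_ip, client_ips = vpn_pool("10.8.0.0", "255.255.255.0")
print(net, server_ip, len(client_ips))
```

A quick calculation like this helps confirm that the VPN subnet is large enough for the number of migration clients and does not overlap with the source or destination networks.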

Comparing Security Features of VPN Protocols

Different VPN protocols offer varying levels of security and performance. The choice of protocol depends on the specific security requirements, the network environment, and the performance needs.* IPSec (Internet Protocol Security): IPSec is a widely used protocol suite that provides robust security features, including authentication, encryption, and integrity checking. IPSec operates at the network layer (Layer 3) of the OSI model, protecting all traffic passing through the VPN tunnel.

It is often used in site-to-site VPNs and offers strong security but can be more complex to configure. IPSec is typically implemented with protocols like IKE (Internet Key Exchange) for key exchange and AH (Authentication Header) and ESP (Encapsulating Security Payload) for security services.

Example

IPSec is commonly used to secure VPN connections between routers in different corporate offices.

OpenVPN

OpenVPN is an open-source protocol that offers a high degree of flexibility and security. It supports both UDP and TCP protocols, allowing it to traverse firewalls more easily. OpenVPN utilizes SSL/TLS for encryption and authentication, providing strong security. It is generally considered easier to configure than IPSec, making it a popular choice for client-to-site VPNs. OpenVPN can also be configured with various ciphers and authentication methods, offering customizable security.

Example

OpenVPN is a popular choice for remote workers to securely connect to a company’s network.

WireGuard

WireGuard is a relatively new VPN protocol that aims to provide a faster and simpler VPN solution than older protocols. It utilizes modern cryptography and is designed for ease of use and high performance. WireGuard uses a streamlined key exchange process and a minimal codebase, which makes it easier to audit and reduces its attack surface. However, WireGuard is younger than IPSec and OpenVPN, so support in enterprise tooling may be less mature.

Example

WireGuard can be used for high-speed data transfer between cloud servers or for securing IoT devices.

The following table provides a comparative overview of the security features of the different VPN protocols.

| Feature | IPSec | OpenVPN | WireGuard |
| :--- | :--- | :--- | :--- |
| Encryption | AES, 3DES, others | AES, Blowfish, others | ChaCha20-Poly1305 |
| Authentication | Pre-shared key, digital certificates | Digital certificates, username/password | Public keys (cryptokey routing) |
| Key Exchange | IKE, others | SSL/TLS | Noise protocol |
| Protocol | IP | UDP, TCP | UDP |
| Performance | Moderate | Moderate | High |
| Complexity | High | Moderate | Low |
| Firewall Traversal | Can be challenging | Generally good | Generally good |

Choosing the appropriate VPN protocol involves considering factors such as security requirements, performance needs, and ease of configuration.

For high security and flexibility, OpenVPN is a good choice. For high performance and simplicity, WireGuard is an emerging option. IPSec provides robust security and is suitable for site-to-site VPNs.

Securing Data Transfer with SFTP/SCP

Secure File Transfer Protocol (SFTP) and Secure Copy Protocol (SCP) provide robust mechanisms for securely transferring data during migration processes. These protocols utilize encryption to protect data confidentiality and integrity, mitigating the risks associated with unauthorized access or data tampering. Their adoption is critical in environments where sensitive information is being moved between systems, ensuring data security throughout the migration lifecycle.

Secure File Transfer Protocol (SFTP) and Secure Copy Protocol (SCP) Functionality

SFTP and SCP serve distinct yet related functions in secure data transfer. SFTP operates over SSH (Secure Shell), offering a more feature-rich and secure file transfer capability compared to traditional FTP (File Transfer Protocol). SCP, built on SSH, provides a simplified method for copying files between hosts.

SFTP:

- SFTP, also known as SSH File Transfer Protocol, is a secure protocol that operates over an SSH connection. It provides a more comprehensive set of features compared to FTP, including directory manipulation, file permissions management, and resuming interrupted transfers.

- SFTP uses encryption to protect the data in transit, preventing eavesdropping and ensuring data integrity. It authenticates the client and server using SSH key-based authentication or passwords.

- SFTP copes better with unreliable networks because interrupted transfers can be resumed, and it carries file metadata such as permissions and timestamps over the same encrypted channel.

SCP:

- SCP, Secure Copy Protocol, is a command-line utility that leverages SSH for secure file transfer. It simplifies copying files between local and remote systems or between two remote systems.

- SCP uses SSH encryption to encrypt the data during transfer, safeguarding the confidentiality of the data.

- SCP’s simplicity makes it easy to use, particularly for quick file transfers. However, it lacks some of the advanced features of SFTP, such as directory browsing and file permissions management.
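Migration transfers are usually scripted rather than typed interactively. The following is a minimal sketch, assuming the OpenSSH `sftp` client is installed, that builds a non-interactive command line. The user, host, key path, and batch file name are hypothetical placeholders, and the function only assembles the arguments without running anything.

```python
import shlex


def build_sftp_command(user, host, key_path, batch_file, port=22):
    """Return the argument list for a non-interactive OpenSSH sftp session."""
    return [
        "sftp",
        "-b", batch_file,                   # read sftp commands (put/get) from this file
        "-i", key_path,                     # private key for key-based authentication
        "-P", str(port),                    # server port
        "-o", "BatchMode=yes",              # never fall back to a password prompt
        "-o", "StrictHostKeyChecking=yes",  # refuse hosts missing from known_hosts
        f"{user}@{host}",
    ]


# Hypothetical values for illustration only.
cmd = build_sftp_command(
    "migrationuser", "sftp.example.com",
    "/home/ops/.ssh/migration_ed25519", "upload.batch",
)
print(shlex.join(cmd))
```

Passing the list to `subprocess.run(cmd, check=True)` would start the transfer. Keeping the arguments as a list avoids shell quoting problems with unusual file names.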

Configuring SFTP/SCP Servers for Secure Data Migration

Configuring SFTP/SCP servers requires careful attention to security settings to ensure the confidentiality and integrity of data during migration. This involves setting up user accounts, managing authentication methods, and implementing access controls.

Server Configuration Steps:

- Installation and Setup: Install an SSH server that includes an SFTP subsystem (e.g., OpenSSH) on the target server; the same SSH service also provides SCP functionality. Note that vsftpd with TLS/SSL provides FTPS, which is a different protocol from SFTP. Verify the installation.

- User Account Creation: Create dedicated user accounts on the server specifically for data migration purposes. Assign strong passwords or, preferably, configure SSH key-based authentication for enhanced security.

- SSH Key-Based Authentication: Generate SSH key pairs (public and private keys) for the migration users. Distribute the public keys to the server and keep the private keys secure. This eliminates the need for password authentication, reducing the risk of brute-force attacks.

- SFTP Configuration: Configure the SFTP server settings to restrict access to specific directories and enable logging for auditing purposes.

- SCP Configuration: SCP functionality is generally enabled by default if SSH is running. However, it’s crucial to review the SSH configuration (e.g., `sshd_config`) to ensure appropriate access controls and security settings are in place.

- Firewall Rules: Configure firewall rules to allow incoming connections on the SFTP/SSH port (typically port 22) from authorized source IP addresses only. This limits the attack surface and prevents unauthorized access.

- Regular Updates: Keep the SFTP server and SSH software up to date with the latest security patches to address any known vulnerabilities.

Example (OpenSSH configuration):

To restrict SFTP access to a specific directory for a user named “migrationuser”, edit the `sshd_config` file (typically located at `/etc/ssh/sshd_config`) and add the following lines:

    Match User migrationuser
        ChrootDirectory /path/to/migration/directory
        ForceCommand internal-sftp
        AllowTcpForwarding no
        X11Forwarding no

In this configuration, the `ChrootDirectory` restricts the user to a specific directory, `ForceCommand internal-sftp` forces the use of SFTP, and the other options disable TCP forwarding and X11 forwarding for added security.
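A common stumbling block with `ChrootDirectory` is that OpenSSH rejects the session unless the directory and every parent directory are owned by root and not writable by group or others. The sketch below is one way to check this before a migration window; the function names are hypothetical and the check is a simplification of what `sshd` enforces.

```python
import os
import stat


def chroot_problems(st_uid, st_mode):
    """List reasons a directory would be unsuitable as a ChrootDirectory component."""
    problems = []
    if not stat.S_ISDIR(st_mode):
        problems.append("not a directory")
    if st_uid != 0:
        problems.append("not owned by root")
    if st_mode & (stat.S_IWGRP | stat.S_IWOTH):
        problems.append("writable by group or others")
    return problems


def check_chroot_path(path):
    """Check the directory and each of its parents, up to the filesystem root."""
    findings = {}
    current = os.path.abspath(path)
    while True:
        info = os.stat(current)
        issues = chroot_problems(info.st_uid, info.st_mode)
        if issues:
            findings[current] = issues
        parent = os.path.dirname(current)
        if parent == current:  # reached the filesystem root
            break
        current = parent
    return findings
```

An empty dictionary from `check_chroot_path("/path/to/migration/directory")` suggests the ownership and mode requirements are met. Files the user uploads then belong in a writable subdirectory inside the chroot.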

Best Practices for SFTP/SCP Usage

Adhering to best practices is essential for maintaining the security of data transfers using SFTP/SCP. This includes robust key management, stringent access control, and regular security audits.

Key Management:

- Generate Strong Keys: Use strong, cryptographically secure key pairs (e.g., RSA keys with a minimum of 2048 bits or Ed25519 keys) for SSH key-based authentication.

- Protect Private Keys: Securely store private keys. Avoid storing them on systems with weak security. Consider using hardware security modules (HSMs) or password-protected key stores.

- Key Rotation: Regularly rotate SSH keys to mitigate the impact of potential key compromise. Establish a key rotation policy.

- Key Revocation: Implement a mechanism to revoke compromised keys immediately.

Access Control:

- Principle of Least Privilege: Grant users only the necessary permissions. Restrict access to specific directories and files.

- IP-Based Restrictions: Limit access to the SFTP/SCP server based on source IP addresses. This adds an extra layer of security.

- User Account Management: Regularly review user accounts and remove or disable accounts that are no longer needed.

- Authentication Auditing: Enable logging of all authentication attempts and access events. Regularly review these logs for suspicious activity.

Monitoring and Auditing:

- Enable Logging: Enable detailed logging on the SFTP/SCP server to track all file transfer activities, including uploads, downloads, and modifications.

- Regular Audits: Conduct regular security audits to review configurations, access controls, and logs to identify and address potential security vulnerabilities.

- Integrity Checks: Implement mechanisms to verify the integrity of transferred files. This can involve using checksums (e.g., SHA-256) to ensure data has not been tampered with during transit.
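The integrity check described above can be sketched with the Python standard library. The file is hashed in chunks so that large migration files do not have to fit in memory; the function names and the chunk size are illustrative choices, not a prescribed implementation.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Return the hex SHA-256 digest of a file, read in fixed-size chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_expected(path, expected_hex):
    """Compare a file's digest with the value recorded at the source."""
    return hmac.compare_digest(sha256_of_file(path), expected_hex.lower())
```

In practice the source system would record the digest before the transfer, and the destination would call `matches_expected` after the transfer completes. A mismatch means the file should be transferred again.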

Mitigating Potential Vulnerabilities when using SFTP/SCP

SFTP and SCP, while secure protocols, are susceptible to vulnerabilities if not configured and used correctly. Implementing the following mitigation strategies is crucial to protect data during migration.

Vulnerability Mitigation Strategies:

- Man-in-the-Middle (MitM) Attacks: To prevent MitM attacks, verify the server’s host key fingerprint during the initial connection. This ensures you are connecting to the intended server and not an imposter.

- Brute-Force Attacks: Implement SSH key-based authentication to eliminate the reliance on passwords, thereby mitigating the risk of brute-force attacks. If passwords are used, enforce strong password policies and consider rate-limiting login attempts.

- Malware on the Client/Server: Regularly scan both the client and server systems for malware. Ensure that the systems are patched and up to date. Implementing a file integrity monitoring system can also help detect unauthorized file modifications.

- Unencrypted Traffic on the Network: SFTP and SCP encrypt data in transit. Ensure the network itself is secure. Use a VPN if the underlying network is untrusted.

- Misconfigured Permissions: Regularly audit file and directory permissions on the server to ensure that access is restricted appropriately. Avoid overly permissive permissions.

- Vulnerable Software: Keep the SFTP server and SSH software up to date with the latest security patches to address any known vulnerabilities. Regularly scan the system for outdated software.

- Data Loss/Corruption: Implement checksum verification (e.g., SHA-256) to verify the integrity of transferred files and ensure that data is not corrupted during transfer. Implement error handling and retry mechanisms to handle potential network interruptions.
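Host key verification, the first mitigation in the list, can be automated. The sketch below computes the SHA-256 fingerprint of a public key line in the format that OpenSSH tools display (`SHA256:` followed by unpadded Base64) and compares it with a fingerprint obtained out of band, for example from the server administrator. The function names are hypothetical.

```python
import base64
import hashlib
import hmac


def openssh_sha256_fingerprint(public_key_line):
    """Fingerprint of a public key line of the form 'type base64-blob [comment]'."""
    blob = base64.b64decode(public_key_line.split()[1])
    digest = hashlib.sha256(blob).digest()
    return "SHA256:" + base64.b64encode(digest).decode("ascii").rstrip("=")


def host_key_is_trusted(public_key_line, trusted_fingerprint):
    """True only if the presented key matches the fingerprint obtained out of band."""
    return hmac.compare_digest(
        openssh_sha256_fingerprint(public_key_line), trusted_fingerprint
    )
```

The key line can come from the server’s public host key file or from a `known_hosts` entry with the host name field removed. A mismatch should stop the migration job rather than prompt for confirmation.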

Data Integrity and Authentication

Ensuring data integrity and authenticating both the source and destination are crucial during data migration. Compromises in either area can lead to data corruption, unauthorized access, and a complete loss of trust in the migration process. Rigorous methods for verification and authentication are therefore essential components of any secure data migration strategy.

Importance of Data Integrity During Migration

Data integrity refers to the assurance that data remains unaltered and consistent throughout its lifecycle, including during migration. Loss of data integrity can manifest in various ways, from subtle corruption of individual data points to complete data loss. The consequences can be severe, including incorrect business decisions, regulatory non-compliance, and reputational damage.

Methods for Verifying Data Integrity During Migration

Data integrity verification relies on techniques to detect and prevent data alteration during transit. These techniques create a digital fingerprint of the data at the source and verify it against the destination to ensure consistency.

- Checksums: Checksums are values calculated from a block of data, serving as a digital fingerprint. They are generated at the source and recalculated at the destination after the data transfer. If the checksums match, the data has very likely not been altered. Commonly used algorithms include CRC32 and MD5. However, CRC32 only detects accidental corruption and MD5 is no longer collision-resistant, so neither protects against deliberate tampering; cryptographic hashes such as SHA-256 or SHA-3 are preferred for critical data.

- Hashing: Cryptographic hash functions, such as SHA-256 or SHA-3, produce a fixed-size hash value for any input data, and finding two different inputs with the same hash is computationally infeasible. Any change to the input data, no matter how small, will result in a drastically different hash value. This property makes hashing highly effective for detecting data corruption. The hash value is generated at the source and compared with the hash value generated at the destination after data transfer.

- Digital Signatures: Digital signatures, based on public-key cryptography, provide a more robust method of verifying data integrity and authenticity. A digital signature is created by signing a hash of the data with the sender’s private key. The recipient recomputes the hash and uses the sender’s public key to verify the signature against it. This confirms that the data originated from the sender and has not been tampered with.

- Data Validation Rules: Implementing data validation rules within the migration process can further enhance data integrity. These rules check the data against predefined constraints, such as data types, allowed values, and format requirements. These rules help identify and reject corrupted or invalid data during migration, preventing it from being stored in the destination system.
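Validation rules of the kind described in the last item can be expressed as a small table of checks. The sketch below uses a hypothetical customer record with made-up field names and allowed values; a real migration would derive the rules from the destination schema.

```python
import re

# Hypothetical rules for an illustrative customer record.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")
RULES = {
    "customer_id": lambda v: isinstance(v, int) and v > 0,                       # data type and range
    "email": lambda v: isinstance(v, str) and bool(EMAIL_PATTERN.fullmatch(v)),  # format
    "status": lambda v: v in {"active", "inactive", "closed"},                   # allowed values
}


def validate_record(record, rules=RULES):
    """Return the names of fields that are missing or fail their rule."""
    return [
        name for name, check in rules.items()
        if name not in record or not check(record[name])
    ]
```

Records for which `validate_record` returns a non-empty list can be routed to a quarantine table for review instead of being written to the destination.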

Methods for Authenticating Data Sources and Destinations

Authentication ensures that only authorized sources and destinations are involved in the data migration process. This prevents unauthorized access and potential data breaches.

- User Authentication: This involves verifying the identity of users or systems initiating the data migration. Common methods include usernames and passwords, multi-factor authentication (MFA), and digital certificates. Access control lists (ACLs) and role-based access control (RBAC) are then used to grant permissions based on verified identities.

- System Authentication: Systems involved in the migration process, such as servers and databases, also need to be authenticated. This can be achieved using cryptographic keys, digital certificates, or shared secrets. Mutual authentication, where both the source and destination authenticate each other, provides an added layer of security.

- Network Segmentation: Isolating the data migration network from other networks reduces the attack surface. This can be achieved using virtual LANs (VLANs) or network firewalls, restricting access to only authorized systems and services.

- Regular Auditing and Logging: Comprehensive logging of all migration activities, including source and destination IP addresses, timestamps, and data transfer details, is critical for monitoring and detecting unauthorized access or suspicious activity. These logs should be regularly reviewed and audited to identify potential security breaches.
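Mutual authentication between systems is often implemented with TLS client certificates. The following sketch, using the Python standard library `ssl` module, configures a server-side context that rejects any client without a certificate signed by the trusted CA. The function name and the optional file arguments are assumptions made for illustration.

```python
import ssl


def mutual_tls_server_context(cert_file=None, key_file=None, ca_file=None):
    """Build a server-side TLS context that requires a valid client certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    ctx.verify_mode = ssl.CERT_REQUIRED  # handshake fails without a trusted client certificate
    if cert_file and key_file:
        # The server's own identity, presented to the migration client.
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if ca_file:
        # The CA that issued the migration client's certificate.
        ctx.load_verify_locations(cafile=ca_file)
    return ctx
```

The client side mirrors this setup: it loads its own certificate and key and verifies the server against the same or another trusted CA, so each endpoint proves its identity to the other.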

Implementing Multi-Factor Authentication (MFA) for Data Migration Processes

Multi-factor authentication (MFA) significantly enhances security by requiring users to provide multiple forms of verification. This makes it much harder for unauthorized users to gain access, even if their password is compromised.

- Types of MFA Factors: MFA typically involves at least two of the following factors:

  - Something you know: Passwords, PINs, security questions.

  - Something you have: Security tokens, hardware keys, mobile devices.

  - Something you are: Biometric data, such as fingerprints or facial recognition.

- MFA Implementation Strategies:

  - For user logins: Enforce MFA for all user accounts involved in the data migration process. This can be achieved using cloud-based identity providers, such as Microsoft Entra ID (formerly Azure Active Directory) or Okta, or by integrating MFA solutions into the organization’s existing authentication infrastructure.

  - For API access: If the data migration process involves APIs, require MFA for the people who create or rotate API keys and service account credentials, because non-interactive clients cannot answer an MFA prompt. Protect the credentials themselves with short lifetimes and narrowly scoped permissions.

  - For administrative access: Secure administrative access to data migration tools and systems with MFA to prevent unauthorized configuration changes or data manipulation.

- Example: A data migration process involving a cloud-based data warehouse might require users to log in with their username, password, and a one-time code generated by an authenticator app on their mobile device. Additionally, access to the data warehouse might be restricted to specific IP addresses or network segments, adding another layer of security.
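The one-time code from an authenticator app is commonly a time-based one-time password (TOTP, RFC 6238), which builds on the HMAC-based scheme in RFC 4226. The sketch below shows how such a code can be generated and verified, assuming SHA-1, six digits, and a 30-second step, which are the usual defaults. It is meant to illustrate the mechanism, not to replace an identity provider.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret, counter, digits=6):
    """HMAC-based one-time password (RFC 4226) for a shared secret and counter."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret, at_time=None, step=30, digits=6):
    """Time-based one-time password: HOTP over the current time step."""
    now = time.time() if at_time is None else at_time
    return hotp(secret, int(now // step), digits)


def verify_totp(secret, submitted, at_time=None, step=30, window=1):
    """Accept codes from the current step and `window` steps on either side."""
    now = time.time() if at_time is None else at_time
    counter = int(now // step)
    return any(
        hmac.compare_digest(hotp(secret, counter + drift), submitted)
        for drift in range(-window, window + 1)
        if counter + drift >= 0
    )
```

The `window` parameter tolerates small clock differences between the phone and the server. A production system would also rate-limit attempts and reject codes that have already been used.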

Network Segmentation and Isolation

Network segmentation and isolation are critical strategies for bolstering security during data migration. By dividing a network into distinct, logically separated segments, organizations can limit the impact of security breaches, control access, and enforce granular security policies. This approach effectively confines potential threats, preventing them from traversing the entire network and compromising sensitive data. This proactive measure is especially crucial during data migration, a process inherently vulnerable to attack vectors.

Concept of Network Segmentation and Security Improvement

Network segmentation involves dividing a network into smaller, isolated segments, each with its own security controls. This architectural design enhances security by creating boundaries that restrict the lateral movement of threats. If a segment is compromised, the attacker’s access is limited to that segment, preventing them from reaching other critical resources. During data migration, this principle is paramount, as it protects the migrating data and the existing infrastructure.

Steps for Segmenting the Network to Isolate Data Migration Traffic

Implementing network segmentation for data migration requires a methodical approach. The goal is to create a secure, isolated environment for the migration process. This involves several key steps:

- Identify Sensitive Data and Assets: Determine the scope of the migration, including the data and systems involved. Categorize data based on sensitivity levels and regulatory requirements (e.g., PII, PHI, financial data).

- Design the Network Segments: Define the segments based on data sensitivity, functional requirements, and security needs. Consider creating a dedicated migration segment to isolate the migration traffic. This segment should only contain the source and destination systems and the necessary migration tools.

- Implement Network Devices: Configure firewalls, routers, and switches to enforce segmentation. Firewalls act as gatekeepers, controlling traffic flow between segments based on predefined rules. Routers direct traffic between segments, and switches facilitate communication within a segment.

- Configure Access Controls: Implement strict access controls to restrict access to the migration segment. Only authorized personnel should have access to the migration tools and data. Use role-based access control (RBAC) to grant access based on job function and need-to-know principles.

- Monitor and Audit: Implement comprehensive monitoring and auditing to track network traffic, identify security incidents, and ensure compliance. Log all network activity, including access attempts, data transfers, and configuration changes. Regularly review logs and audit trails to detect and respond to potential threats.
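The access rules for a dedicated migration segment can be prototyped before they are translated into firewall configuration. The sketch below models an allowlist with the standard `ipaddress` module; the subnet and the port set are hypothetical values chosen for illustration.

```python
import ipaddress

# Hypothetical addressing plan for a dedicated migration segment.
MIGRATION_SEGMENT = ipaddress.ip_network("10.50.0.0/24")
ALLOWED_PORTS = {22, 443}  # SSH/SFTP and HTTPS only


def is_flow_allowed(src_ip, dst_ip, dst_port):
    """Allow only traffic that stays inside the segment on an approved port."""
    src = ipaddress.ip_address(src_ip)
    dst = ipaddress.ip_address(dst_ip)
    return (
        src in MIGRATION_SEGMENT
        and dst in MIGRATION_SEGMENT
        and dst_port in ALLOWED_PORTS
    )
```

Running planned migration flows through a model like this gives a quick check that the intended rules are default-deny and that no source or destination system sits outside the segment.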

Best Practices for Network Segmentation in a Migration Environment

Adhering to best practices is crucial for the effective implementation of network segmentation during data migration.

- Least Privilege Principle: Grant users and systems only the minimum necessary access to perform their tasks. This limits the potential damage from compromised accounts.

- Regular Security Audits: Conduct regular security audits to assess the effectiveness of the segmentation strategy and identify vulnerabilities.

- Network Intrusion Detection and Prevention Systems (IDS/IPS): Deploy IDS/IPS within each segment to detect and prevent malicious activity.

- Up-to-Date Security Patches: Keep all systems and network devices patched with the latest security updates.

- Strong Authentication and Authorization: Enforce strong authentication mechanisms, such as multi-factor authentication (MFA), to verify user identities.

- Documentation: Maintain detailed documentation of the network segmentation design, security policies, and access controls.

Benefits of Using a Dedicated Migration Network

A dedicated migration network provides significant advantages in terms of security and efficiency. This isolated environment minimizes the risk of data breaches and ensures that the migration process does not interfere with existing network operations.

- Enhanced Security: Isolating the migration traffic reduces the attack surface and limits the impact of potential breaches. Any compromise within the migration network is contained, preventing attackers from accessing production systems.

- Improved Performance: A dedicated network ensures that the migration process does not compete with other network traffic, leading to faster data transfer speeds.

- Simplified Management: A dedicated migration network simplifies the management of security policies and access controls.

- Reduced Risk of Downtime: Isolating the migration process reduces the risk of disrupting production systems and minimizes the potential for downtime.

- Compliance with Regulations: Using a dedicated migration network can help organizations comply with regulatory requirements, such as those related to data privacy and security (e.g., GDPR, HIPAA).

Monitoring and Logging for Security

Data migration, while a critical process for modern organizations, introduces significant security risks. Comprehensive monitoring and logging are paramount for mitigating these risks, enabling real-time threat detection, incident response, and post-migration forensic analysis. A robust monitoring and logging strategy provides the visibility needed to ensure data confidentiality, integrity, and availability throughout the migration lifecycle.

Importance of Monitoring and Logging

Monitoring and logging serve as the bedrock of a proactive security posture during data migration. They provide real-time insights into system behavior, facilitating early detection of anomalies and potential security breaches. This allows security teams to swiftly respond to incidents, minimizing the impact of attacks and ensuring data integrity. Effective logging also supports compliance efforts by providing an audit trail of all activities related to the migration process, demonstrating adherence to regulatory requirements.

Furthermore, the data gathered through monitoring and logging is invaluable for post-migration analysis, enabling organizations to identify vulnerabilities, refine security controls, and improve their overall security posture.

Critical Events to Monitor During a Data Migration

A well-defined monitoring strategy encompasses the tracking of specific events that could indicate a security compromise or operational issue. Careful selection of the events to be monitored allows security teams to focus on the most critical areas, reducing noise and improving the efficiency of threat detection.

- Authentication Failures: Monitoring unsuccessful login attempts is essential to detect brute-force attacks or unauthorized access attempts. Elevated failure rates, especially from unusual locations or at odd hours, warrant immediate investigation.

- Unauthorized Access Attempts: Tracking attempts to access sensitive data or systems that a user is not authorized to use is crucial. This includes attempts to access data outside of defined migration scopes or attempts to modify access control lists.

- Data Transfer Volumes and Rates: Unexpected spikes or drops in data transfer volumes can indicate data exfiltration or performance bottlenecks. Establishing baseline transfer rates and setting alerts for deviations is critical.

- Changes to Security Configurations: Monitoring modifications to firewall rules, access control lists, and other security settings helps identify unauthorized changes that could compromise the security of the migration process.

- Unusual Network Activity: Monitoring network traffic for anomalous patterns, such as connections to suspicious IP addresses or unusual port usage, is essential. This includes monitoring for traffic patterns indicative of malware communication.

- System Resource Utilization: Monitoring CPU usage, memory consumption, and disk I/O helps identify performance bottlenecks and potential denial-of-service attacks. Sudden spikes in resource utilization can signal malicious activity.

- File Integrity Changes: Monitoring for unexpected modifications to critical files, such as system binaries or configuration files, can indicate malware infection or unauthorized tampering.

- Error Logs and System Events: Monitoring system logs for errors, warnings, and other events provides insights into potential operational issues or security vulnerabilities. Pay close attention to events related to file access, network connections, and system processes.
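Baseline-and-deviation alerting for transfer rates, as described in the list above, can be sketched with a simple standard deviation test. The three-sigma threshold and the sample rates are assumptions; real traffic may call for a more robust statistic.

```python
import statistics


def is_rate_anomalous(baseline_mbps, current_mbps, max_sigma=3.0):
    """Flag a transfer rate that deviates strongly from the recorded baseline."""
    mean = statistics.fmean(baseline_mbps)
    spread = statistics.stdev(baseline_mbps)  # needs at least two baseline samples
    if spread == 0:
        return current_mbps != mean
    return abs(current_mbps - mean) / spread > max_sigma
```

Both spikes and drops are flagged, since a sudden spike may point to exfiltration while a sudden drop may point to a bottleneck or an interrupted job.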

Examples of Security Logs and Interpretation

Security logs are the primary source of information for detecting and responding to security incidents. Understanding the format and content of these logs is critical for effective analysis.

- Authentication Logs: These logs record all login attempts, including successful and failed attempts. A typical authentication log entry might include the timestamp, username, source IP address, and status (success/failure). Analyzing these logs can reveal brute-force attacks, compromised credentials, and unauthorized access attempts.

- Network Logs: Network logs capture information about network traffic, including source and destination IP addresses, ports, protocols, and data volumes. Analyzing network logs can reveal suspicious network activity, such as connections to malicious IP addresses or unusual communication patterns. For example, an entry showing a large data transfer to an external IP address at an unusual time could indicate data exfiltration.

- System Logs: System logs record events related to system processes, resource utilization, and errors. Analyzing system logs can help identify performance bottlenecks, system failures, and potential security vulnerabilities. A system log entry indicating a process crash or an unexpected error message might indicate a security issue.

- Access Logs: Access logs record attempts to access specific files, directories, or resources. Analyzing access logs can reveal unauthorized access attempts and data breaches. For example, an entry showing an attempt to access a sensitive file by an unauthorized user should be investigated immediately.

Consider this example of a failed login attempt recorded in an authentication log:

-10-27 14:35:00 UTC, Failed Login, user=john.doe, source_ip=192.168.1.100, reason=invalid password

This log entry indicates that a user, john.doe, attempted to log in from the IP address 192.168.1.100 and failed due to an incorrect password. Multiple failed login attempts from the same IP address within a short period could indicate a brute-force attack.
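Entries in this comma-separated layout are straightforward to turn into structured records for analysis. The sketch below assumes the layout shown above (a timestamp, an event name, then `key=value` pairs) and that values never contain commas; real log formats vary and usually need a dedicated parser.

```python
def parse_auth_entry(line):
    """Split 'timestamp, event, key=value, ...' into a dictionary."""
    timestamp, event, *pairs = [part.strip() for part in line.split(",")]
    entry = {"timestamp": timestamp, "event": event}
    for pair in pairs:
        key, _, value = pair.partition("=")
        entry[key] = value
    return entry
```

Once parsed, entries can be grouped by `source_ip` or `user` to count failures over time.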

Designing a System for Alerting on Security Breaches

A robust alerting system is essential for responding to security incidents in a timely manner. The system should be designed to automatically detect and notify security teams of suspicious activity.

- Log Aggregation: Centralize logs from all relevant systems and applications into a single repository. This allows for comprehensive analysis and correlation of events.

- Event Correlation: Implement rules to correlate events across different logs. This can help identify complex attacks that might not be apparent from individual log entries. For example, a rule could trigger an alert if a failed login attempt is followed by an attempt to access a sensitive file.

- Threshold-Based Alerts: Set thresholds for critical events, such as failed login attempts, data transfer volumes, and resource utilization. When a threshold is exceeded, the system should automatically generate an alert.

- Real-Time Monitoring: Implement real-time monitoring of logs and system metrics. This allows for immediate detection of suspicious activity.

- Alert Notification: Configure the system to send alerts to appropriate security personnel via email, SMS, or other communication channels. The alerts should include relevant information about the incident, such as the source IP address, username, and type of event.

- Incident Response Workflow: Define a clear incident response workflow to guide security teams in responding to alerts. This workflow should include steps for investigation, containment, eradication, and recovery.

- Example: If the system detects five failed login attempts within five minutes from a specific IP address, an alert should be triggered. This alert could be sent to the security operations center (SOC) and include details about the IP address, username, and timestamp of the failed attempts.
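The threshold rule in the example (five failed logins within five minutes from one IP address) can be sketched as a sliding window per source address. The class name is hypothetical, and a real deployment would usually express this as a rule in a SIEM rather than as custom code.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


class FailedLoginMonitor:
    """Track failed logins per source IP inside a sliding time window."""

    def __init__(self, threshold=5, window=timedelta(minutes=5)):
        self.threshold = threshold
        self.window = window
        self._failures = defaultdict(deque)  # source IP -> timestamps of recent failures

    def record_failure(self, source_ip, when):
        """Record one failed login; return True when the threshold is reached."""
        recent = self._failures[source_ip]
        recent.append(when)
        # Drop failures that have fallen out of the window.
        while recent and when - recent[0] > self.window:
            recent.popleft()
        return len(recent) >= self.threshold
```

Each call takes the timestamp from the log entry rather than the wall clock, so the same logic works for live streams and for replaying historical logs.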

Data Masking and Anonymization Techniques

Data masking and anonymization are crucial elements in safeguarding sensitive information during data migration processes. They serve to mitigate the risks associated with data breaches and unauthorized access by obscuring or removing identifiable data, thereby preserving privacy and compliance with regulations such as GDPR and CCPA. These techniques are particularly vital when migrating data across different environments, especially when those environments have varying levels of security or are accessible to third parties.

Effective implementation requires careful planning and the selection of appropriate methods based on the specific data types and the intended use of the data post-migration.

Purpose of Data Masking and Anonymization in Data Migration

Data masking and anonymization fulfill distinct yet complementary roles in the data migration lifecycle. Data masking transforms sensitive data into a non-sensitive format, such as replacing real names with fictitious ones or partially redacting credit card numbers. Anonymization, on the other hand, goes a step further by irreversibly removing or altering data in a way that prevents the identification of individuals.

Both techniques are designed to reduce the risk of exposing sensitive information during migration, minimizing the potential for data breaches and ensuring compliance with data privacy regulations. The choice between masking and anonymization often depends on the intended use of the data in the target environment; masked data can still be useful for testing and development purposes, while anonymized data is essential when data is to be shared publicly or used for research.

Methods for Masking Sensitive Data During Migration

Several methods can be employed to mask sensitive data effectively during the migration process. These techniques vary in their complexity and the degree to which they obscure the original data.

- Substitution: This involves replacing sensitive data with fictitious, but realistic, values. For example, a real customer name could be substituted with a generated name from a name generator, or an actual address could be replaced with a randomly generated, but valid, address. The key to effective substitution is maintaining the format and data type of the original data, which preserves data utility for testing and development.

- Redaction: Redaction entails removing portions of sensitive data. This is commonly used for credit card numbers, where only the last four digits are displayed, while the preceding digits are masked with characters like ‘X’ or ‘*’. Similarly, parts of social security numbers, phone numbers, or other identifying information can be redacted.

- Shuffling: Shuffling, or data scrambling, involves rearranging the values within a specific data field. For example, all the values in the “salary” column might be reordered, assigning each salary to a different employee. This preserves the statistical properties of the data while obscuring the association between an individual and their salary.

- Data Generation: This involves creating synthetic data that mimics the characteristics of the original data. This is particularly useful for creating test datasets when the original data is too sensitive to be migrated. The generated data must reflect the original data’s format, data types, and statistical properties to be useful for testing and development.
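Two of the methods above, redaction and shuffling, are simple enough to sketch directly. The function names and the sample field names are hypothetical, and the code illustrates the idea rather than a hardened masking pipeline.

```python
import random


def redact_card_number(card_number, visible=4, mask_char="X"):
    """Keep only the last `visible` digits and mask the rest."""
    digits = [c for c in card_number if c.isdigit()]
    hidden = max(len(digits) - visible, 0)
    return mask_char * hidden + "".join(digits[hidden:])


def shuffle_column(rows, column, seed=None):
    """Return copies of the rows with one column's values reassigned at random."""
    values = [row[column] for row in rows]
    random.Random(seed).shuffle(values)
    return [{**row, column: value} for row, value in zip(rows, values)]
```

Shuffling keeps the overall distribution of a column intact, but some rows may keep their original value by chance, and very small tables offer little protection. It is best combined with other techniques for highly sensitive fields.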

Techniques for Anonymizing Data to Protect Privacy

Anonymization goes beyond masking by making it impossible to identify individuals from the data. Several techniques achieve this, offering varying degrees of privacy protection.

- Generalization: Generalization involves reducing the granularity of data by replacing specific values with broader categories. For example, a specific date of birth could be generalized to a year or a decade, or a specific street address could be generalized to a city or a region. This technique reduces the risk of re-identification by making the data less specific.

- Suppression: Suppression involves removing specific data points or entire attributes. This is often used to remove directly identifying information, such as names or social security numbers. Suppression can also be used to remove data points that, in combination with other data, could lead to re-identification.

- Aggregation: Aggregation involves combining individual data points into larger groups or categories. For example, individual sales transactions might be aggregated into monthly or quarterly totals. This technique hides individual data points within a larger dataset, making it more difficult to identify individuals.

- Pseudonymization: Pseudonymization replaces direct identifiers with pseudonyms, which are artificial identifiers. The original data is not destroyed, and anyone holding the mapping table or key can restore the link to the individual, so pseudonymized data is generally still treated as personal data under regulations such as GDPR. Pseudonyms are often generated using keyed cryptographic hash functions or lookup tables that keep each pseudonym unique and consistent across different datasets.
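The four techniques above can be illustrated with a short standard-library sketch. The sample rows, the field names, and the `PSEUDONYM_KEY` value are hypothetical; in a real migration the key would be held in a secrets manager, and the right level of generalization would depend on a re-identification risk assessment.

```python
import hashlib
import hmac
from collections import defaultdict

# Hypothetical key for illustration only; never hard-code a real key.
PSEUDONYM_KEY = b"example-key-do-not-use"

# Hypothetical sample rows.
patients = [
    {"name": "Alice Smith", "ssn": "123-45-6789", "birth_date": "1984-03-12",
     "city": "Austin", "spend": 120.0},
    {"name": "Bob Johnson", "ssn": "987-65-4321", "birth_date": "1991-11-02",
     "city": "Austin", "spend": 80.0},
    {"name": "Charlie Brown", "ssn": "555-12-3456", "birth_date": "1979-07-30",
     "city": "Dallas", "spend": 45.5},
]


def generalize_birth_date(iso_date):
    """Generalization: reduce a full date of birth to its decade."""
    year = int(iso_date[:4])
    return f"{year // 10 * 10}s"


def suppress(row, fields):
    """Suppression: drop directly identifying attributes entirely."""
    return {k: v for k, v in row.items() if k not in fields}


def pseudonymize(value, key=PSEUDONYM_KEY):
    """Pseudonymization: a keyed hash maps the same input to the same pseudonym."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def aggregate_spend(rows, group_field):
    """Aggregation: report totals per group instead of per individual."""
    totals = defaultdict(float)
    for row in rows:
        totals[row[group_field]] += row["spend"]
    return dict(totals)


anonymized = []
for row in patients:
    out = suppress(row, {"name", "ssn"})
    out["person_id"] = pseudonymize(row["ssn"])
    out["birth_decade"] = generalize_birth_date(out.pop("birth_date"))
    anonymized.append(out)

print(anonymized)
print(aggregate_spend(patients, "city"))
```

A keyed hash (HMAC) is used here instead of a plain hash because low-entropy identifiers can otherwise be recovered by hashing every possible value and comparing the results.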

Implementation of Data Masking and Anonymization Using Specific Tools or Techniques

Implementing data masking and anonymization requires careful selection of tools and techniques tailored to the specific data and migration requirements.

- Database-Specific Tools: Many database management systems (DBMS) provide built-in features or extensions for data masking and anonymization. For example, Oracle Data Masking and Subsetting can produce masked copies of data for non-production use, while Microsoft SQL Server Dynamic Data Masking masks values in query results for non-privileged users without changing the stored data. These tools typically allow for defining masking rules based on data types, column names, or other criteria.

- Specialized Data Masking Software: Several commercial software packages specialize in data masking and anonymization. These tools often provide a user-friendly interface for defining masking rules, generating synthetic data, and managing the masking process. Examples include IBM InfoSphere Optim Data Privacy, Delphix, and IRI FieldShield. These tools can support various data sources and targets, making them suitable for complex migration scenarios.

- Scripting Languages and Libraries: Scripting languages like Python, with libraries such as Faker and Pandas, can be used to implement custom data masking and anonymization solutions. This approach offers greater flexibility and control over the masking process, allowing for tailored solutions that meet specific requirements. For instance, a Python script can be written to read data from a source, apply masking rules, and write the masked data to a target.

- Example: Python with Faker Library

A Python script using the Faker library can mask customer names and addresses during a data migration.

import pandas as pd
from faker import Faker

fake = Faker()

# Sample data (replace with your actual data source)
data = {
    'customer_name': ['Alice Smith', 'Bob Johnson', 'Charlie Brown'],
    'address': ['123 Main St', '456 Oak Ave', '789 Pine Ln'],
}
df = pd.DataFrame(data)

# Mask customer names with generated values
df['customer_name'] = [fake.name() for _ in range(len(df))]

# Mask addresses with generated values
df['address'] = [fake.address() for _ in range(len(df))]

print(df)

This script generates fake names and addresses using the Faker library and replaces the original data with these fictitious values. This demonstrates how to mask sensitive data during the migration process using readily available tools.

Compliance and Regulatory Considerations

Data migration projects must meticulously address compliance and regulatory requirements to avoid legal repercussions, financial penalties, and reputational damage. Ignoring these obligations can result in significant breaches, particularly concerning the handling of sensitive data during its movement. Careful planning and implementation of appropriate safeguards are crucial for a successful and compliant data migration strategy.

Identifying Relevant Compliance Regulations (e.g., GDPR, HIPAA)

Several regulations directly impact data migration, varying based on the data type, industry, and geographical locations involved. Understanding these regulations is fundamental to constructing a compliant migration strategy.

- General Data Protection Regulation (GDPR): GDPR, which applies to organizations processing the personal data of individuals in the European Union (EU), imposes stringent requirements. It mandates data minimization and purpose limitation and grants rights such as the right to erasure (the “right to be forgotten”), among other obligations. Data migration processes must ensure that personal data is handled in compliance with these principles. For instance, when migrating personal data of individuals in the EU to a new system, the migration must rest on a valid lawful basis (consent is one of several), preserve individuals’ ability to access their data, and keep the data accurate and up to date.

- Health Insurance Portability and Accountability Act (HIPAA): HIPAA, applicable in the United States, governs the protection of protected health information (PHI). Covered entities, such as healthcare providers and health plans, must ensure the confidentiality, integrity, and availability of PHI during data migration. This includes implementing technical, physical, and administrative safeguards to protect against unauthorized access, use, or disclosure of PHI. An example is migrating patient records from an older system to a new electronic health record (EHR) system; this process must involve encryption, access controls, and audit trails to meet HIPAA requirements.

- California Consumer Privacy Act (CCPA): The CCPA, similar to GDPR, grants California consumers rights regarding their personal information, including the right to know, the right to delete, and the right to opt out of the sale of their personal information. Data migration processes affecting California residents’ data must comply with these rights.

- Payment Card Industry Data Security Standard (PCI DSS): PCI DSS applies to organizations that store, process, or transmit payment card data. If cardholder data is involved in a migration, the process must adhere to PCI DSS requirements for secure data transmission, storage, and access control. This includes encrypting cardholder data both in transit and at rest.

- Other Industry-Specific Regulations: Depending on the industry, other regulations may apply, such as the Gramm-Leach-Bliley Act (GLBA) for financial institutions, which requires protecting customer financial information, and the Family Educational Rights and Privacy Act (FERPA) for educational institutions, which protects student educational records.

Ensuring Data Migration Processes Comply with Regulations

Compliance necessitates a proactive and structured approach, integrating regulatory requirements into the entire data migration lifecycle. This involves careful planning, implementation of security measures, and ongoing monitoring.

- Data Inventory and Classification: Before migration, identify and classify all data to determine its sensitivity and the applicable regulations. This allows for tailoring security measures to the specific data types. For instance, if migrating both public and private data, the private data will require more stringent security controls.

- Data Mapping and Transformation: Map data fields and transform data formats as needed. Ensure that transformations do not compromise data integrity or regulatory compliance. For example, when migrating data between different database systems, ensure data types are compatible, and sensitive data is properly encrypted during transformation.

- Data Encryption: Implement encryption at rest and in transit to protect data confidentiality. Use strong encryption algorithms and key management practices. Consider using encryption for all sensitive data, such as personal identifiers, financial information, or health records.

- Access Controls: Implement robust access controls to restrict data access to authorized personnel only. Apply the principle of least privilege. This includes using multi-factor authentication and regularly reviewing access permissions.

- Secure Data Transfer Protocols: Use secure protocols such as TLS (version 1.2 or later; the older SSL versions are deprecated), SFTP, or VPNs for data transfer. These protocols encrypt data in transit, protecting it from eavesdropping and unauthorized access.

- Data Retention and Disposal: Establish data retention policies that align with regulatory requirements. Securely dispose of data when it is no longer needed. This may involve data erasure or physical destruction of storage media.

- Regular Audits and Monitoring: Conduct regular audits and monitoring to ensure compliance with regulations and the effectiveness of security measures. Monitor logs for any unusual activity or security breaches.
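As one illustration of the secure transfer step above, the following sketch builds a client-side TLS configuration with Python's standard `ssl` module. The function name `build_migration_tls_context` and the optional `ca_file` parameter are assumptions made for this example; the minimum protocol version should follow your own policy.

```python
import ssl


def build_migration_tls_context(ca_file=None):
    """Return a client-side TLS context that refuses legacy protocol versions."""
    # create_default_context() turns on certificate verification and
    # hostname checking; ca_file optionally points at a private CA bundle.
    context = ssl.create_default_context(cafile=ca_file)
    # Reject SSLv3, TLS 1.0, and TLS 1.1 connections.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = build_migration_tls_context()
print(context.minimum_version, context.verify_mode, context.check_hostname)
```

The resulting context can then be passed to `context.wrap_socket(sock, server_hostname=...)` or to an HTTP client that accepts an `SSLContext`, so every connection opened by the migration job inherits the same settings.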

Checklist for Assessing Regulatory Compliance During Data Migration

A comprehensive checklist can help organizations systematically assess and ensure regulatory compliance during data migration.

- Data Discovery and Classification:

  - Has all data been identified and classified according to sensitivity?

  - Are applicable regulations for each data type identified?

- Legal and Contractual Review:

  - Have all relevant contracts and agreements been reviewed for data migration requirements?

  - Are data processing agreements in place with any third-party vendors?

- Data Security Measures:

  - Is data encrypted at rest and in transit?

  - Are access controls and authentication mechanisms in place?

  - Are secure data transfer protocols being used?

  - Are audit trails and logging enabled?

- Data Transformation and Migration Processes:

  - Are data transformations compliant with regulatory requirements?

  - Are data integrity checks in place to ensure data accuracy?

- Data Retention and Disposal:

  - Are data retention policies defined and documented?

  - Is data securely disposed of when no longer needed?

- Documentation and Training:

  - Is the data migration process documented?

  - Have relevant personnel received training on data security and compliance?

- Risk Assessment and Mitigation:

  - Has a risk assessment been conducted to identify potential vulnerabilities?

  - Are mitigation strategies in place to address identified risks?
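The data integrity item in the checklist can be made concrete with a per-row digest comparison between source and target. The sketch below is one possible approach using only the standard library; the row layout, the `id` key, and the function names are hypothetical.

```python
import hashlib
import json


def row_digest(row):
    """Hash a row's canonical JSON form so field order does not affect the result."""
    canonical = json.dumps(row, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def compare_datasets(source_rows, target_rows, key):
    """Report keys missing from the target, unexpected in it, or changed in flight."""
    source = {row[key]: row_digest(row) for row in source_rows}
    target = {row[key]: row_digest(row) for row in target_rows}
    return {
        "missing": sorted(source.keys() - target.keys()),
        "unexpected": sorted(target.keys() - source.keys()),
        "changed": sorted(
            k for k in source.keys() & target.keys() if source[k] != target[k]
        ),
    }


# Hypothetical sample data.
source_rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": "b@example.com"},
    {"id": 3, "email": "c@example.com"},
]
target_rows = [
    {"email": "a@example.com", "id": 1},  # same content, different field order
    {"id": 2, "email": "B@example.com"},  # altered during migration
]

report = compare_datasets(source_rows, target_rows, key="id")
print(report)
```

When masking or other transformations are applied during migration, the comparison should run against the expected transformed rows, not the raw source, since the digests will otherwise differ by design.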

Documenting Data Migration Processes for Compliance Purposes

Comprehensive documentation is essential for demonstrating compliance, facilitating audits, and enabling efficient troubleshooting. This documentation should cover all aspects of the data migration process.

- Data Migration Plan: This document should outline the scope, objectives, and timeline of the migration project. It should also detail the data sources, destinations, and migration methods.

- Data Inventory and Classification: A detailed inventory of all data, along with its classification based on sensitivity and regulatory requirements.

- Data Mapping and Transformation Specifications: Documentation detailing how data fields are mapped and transformed during the migration process.

- Security Measures Implemented: A description of all security measures implemented, including encryption methods, access controls, and secure data transfer protocols.

- Access Control Lists (ACLs): Documentation outlining the access controls implemented, specifying who has access to what data and systems.

- Audit Logs: Documentation of audit logs, detailing all activities performed during the data migration process, including user actions, data modifications, and system events.

- Testing and Validation Reports: Reports summarizing the results of data migration testing and validation, demonstrating that data integrity and accuracy have been maintained.

- Incident Response Plan: A documented plan outlining how to respond to security incidents or data breaches during or after the data migration.

- Training Records: Records of training provided to personnel involved in the data migration, demonstrating that they are aware of their responsibilities and the security measures in place.

- Data Retention and Disposal Policies: Documentation outlining data retention and disposal policies, including how data is stored, archived, and securely deleted.
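Audit logs are easier to review and retain when each event is written as a structured record. The sketch below shows one possible shape for such a record as a JSON line; the field names and the `audit_record` helper are illustrative assumptions, not a prescribed format.

```python
import json
from datetime import datetime, timezone


def audit_record(actor, action, resource, outcome, **details):
    """Build one audit entry as a JSON line with a UTC timestamp."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "resource": resource,
        "outcome": outcome,
        "details": details,
    }
    return json.dumps(entry, sort_keys=True)


# Hypothetical event: a service account copied one table over TLS.
line = audit_record(
    "migration-svc", "copy_table", "crm.customers", "success",
    rows=1200, protocol="TLS",
)
print(line)
```

Appending one such line per event to write-once storage gives auditors a chronological trail that can be filtered by actor, action, or resource.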

Conclusion

In conclusion, securing data in transit during migration requires a multi-layered approach that encompasses encryption, secure protocols, robust network design, and vigilant monitoring. By implementing the strategies outlined in this guide, organizations can significantly reduce the risk of data breaches, maintain data integrity, and comply with relevant regulations. The journey of data migration, while complex, can be navigated securely with a proactive and informed approach to data protection.

Continuous evaluation and adaptation of security measures are essential to address evolving threats and ensure the long-term protection of valuable data assets.

Frequently Asked Questions

What is the primary difference between symmetric and asymmetric encryption in data migration?

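Symmetric encryption uses a single key for both encryption and decryption, offering faster performance but requiring a secure way to share that key. Asymmetric encryption uses a key pair (a public key and a private key), which removes the need to share a secret in advance but is considerably slower. In practice the two are combined: protocols such as TLS use asymmetric cryptography to establish a session key and symmetric encryption to protect the bulk data.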

How does network segmentation contribute to data security during migration?

Network segmentation isolates data migration traffic from other network segments, limiting the impact of a potential breach. If attackers compromise the migration process, segmentation makes it much harder for them to reach other sensitive systems and data.